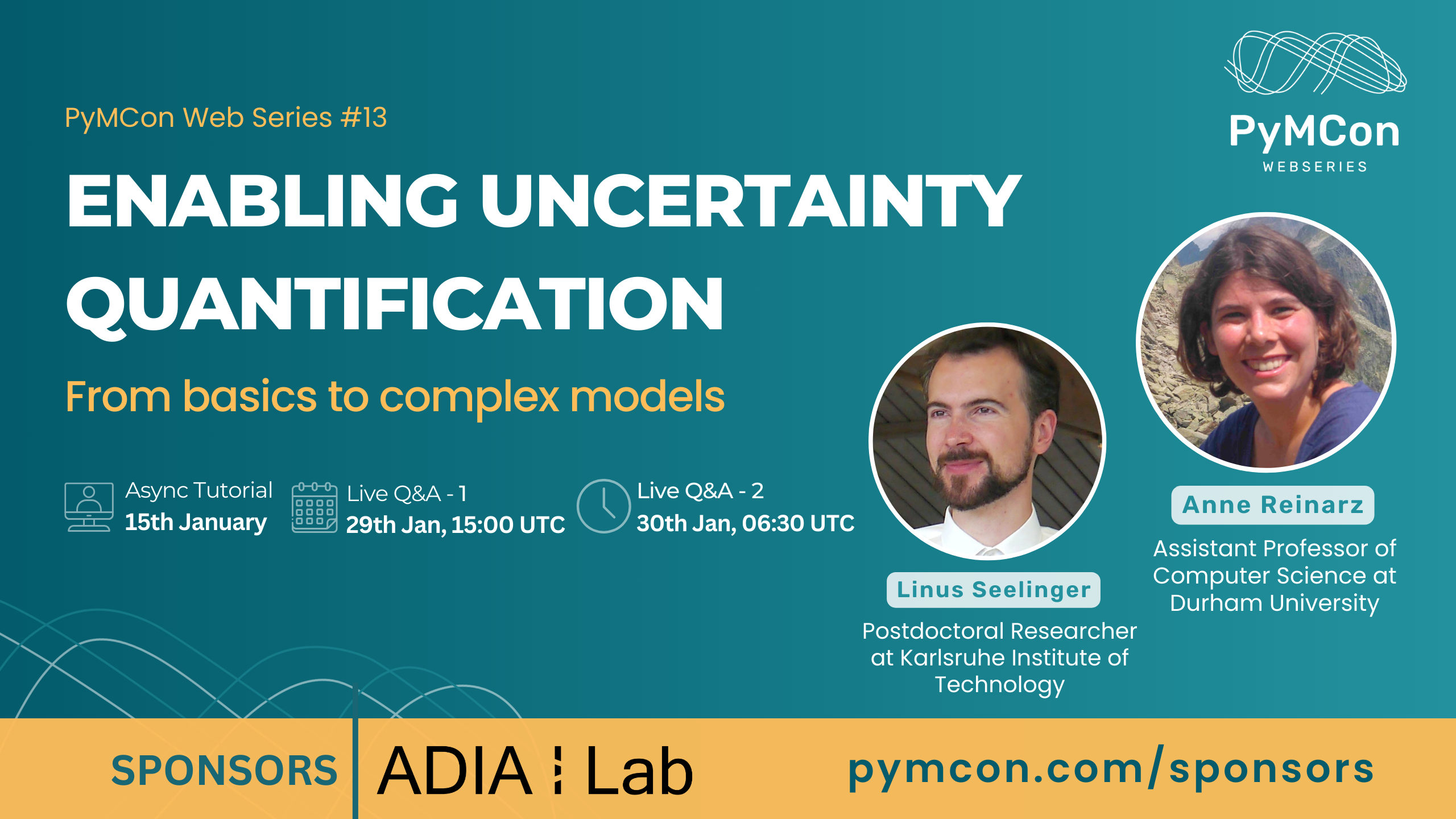

Tuesday, January 30, 2024 at 6:30 UTC (12:00 PM IST)

Enabling Uncertainty Quantification

From basics to complex models

Online

Accounting for uncertainty is essential in designing safe aircraft, in medical decision-making, and in many other fields. UM-Bridge enables straightforward uncertainty quantification (UQ) on advanced models by removing technical barriers.

Complex numerical models often consist of large code bases that are difficult to integrate with UQ packages such as PyMC, which holds back many interesting applications. UM-Bridge is a universal interface for linking UQ methods and models, greatly accelerating development from prototype to high-performance computing.

This hands-on tutorial teaches participants how to build UQ applications using PyMC and UM-Bridge. We cover practical exercises ranging from basic toy examples all the way to controlling parallelized models on a live cloud cluster. Beyond that, we encourage participants to bring their own methods and problems.

Anne Reinarz & Linus Seelinger

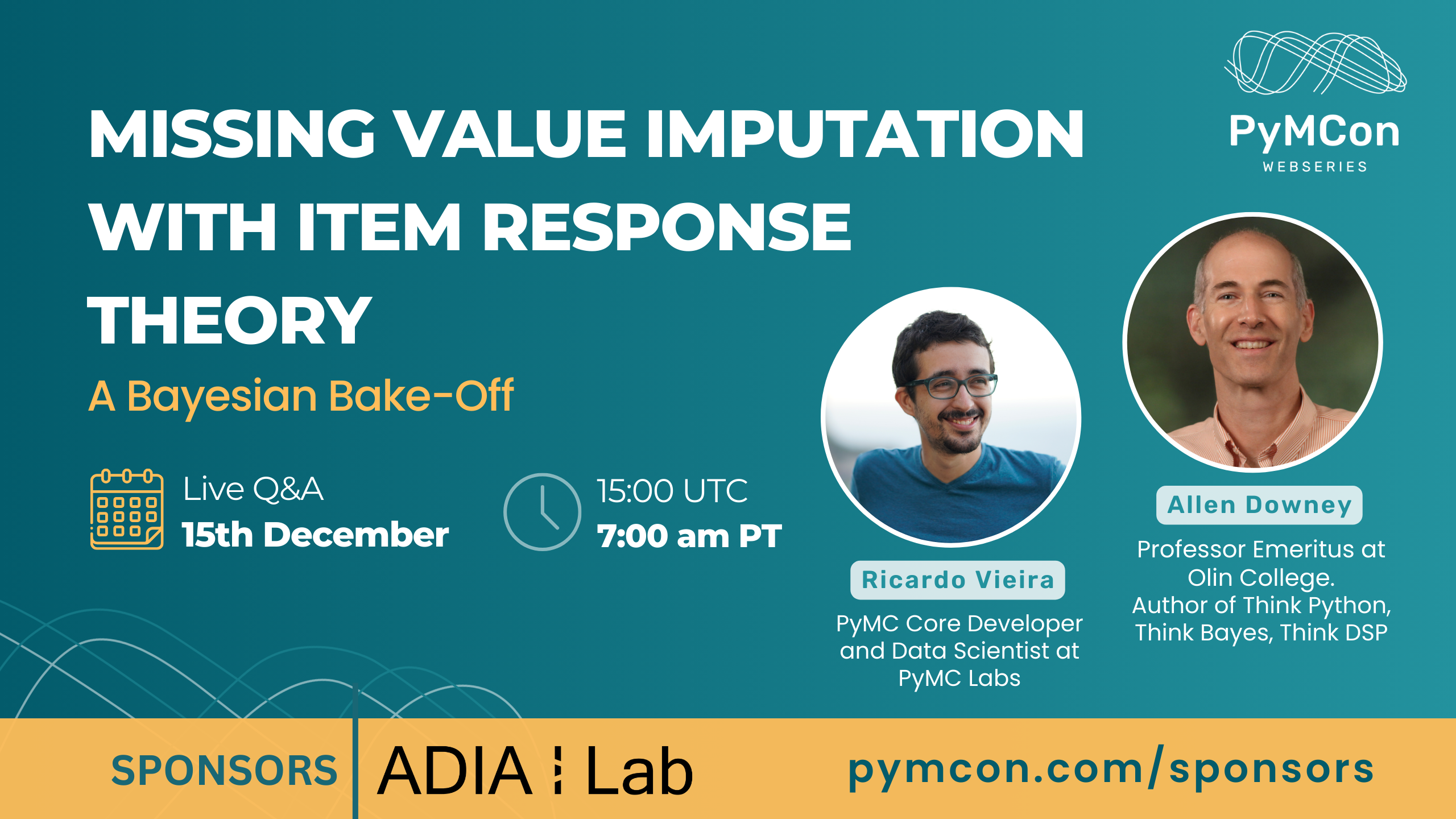

Allen Downey & Ricardo Vieira

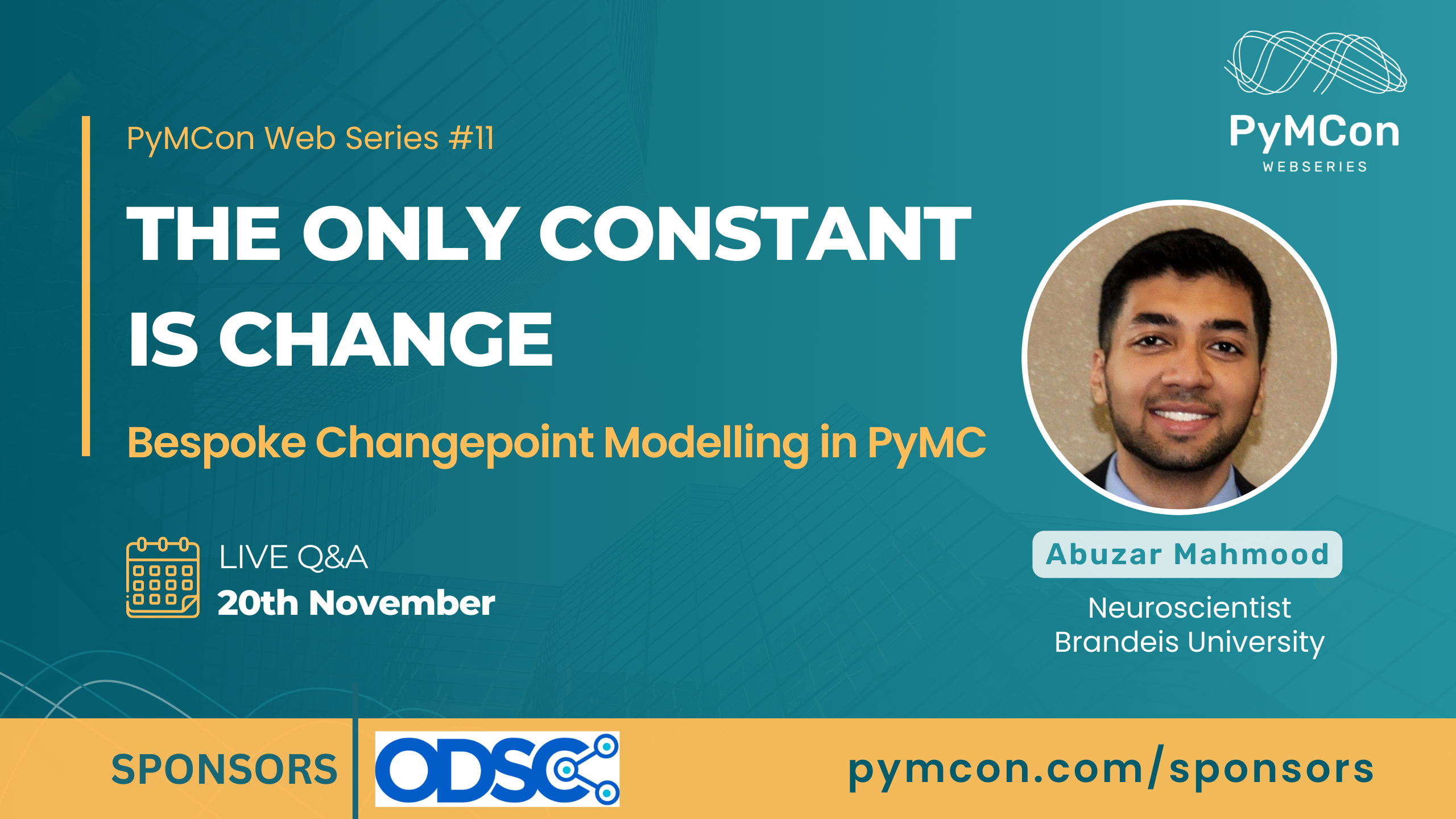

Abuzar Mahmood